Before GPT-3 existed, building conversational AI in production meant assembling a pipeline from parts: an intent classifier, an entity extractor, a dialogue manager, a response generator. Each component was a separate problem. Each had its own failure modes. And they definitely didn’t talk to each other as cleanly as the architecture diagram suggested.

In 2017, I built one of those systems for a government agency — a conversational assistant handling citizen queries, backed by Rasa NLU and a custom dialogue management layer. It shipped. It ran in production. It also gave me a front-row seat to every ugly corner of pre-LLM conversational AI.

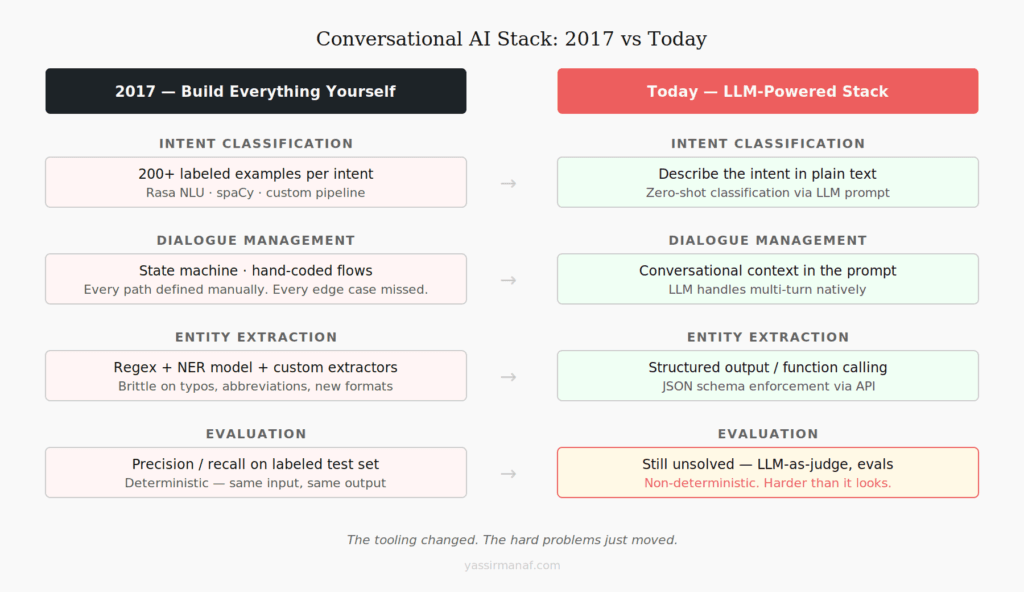

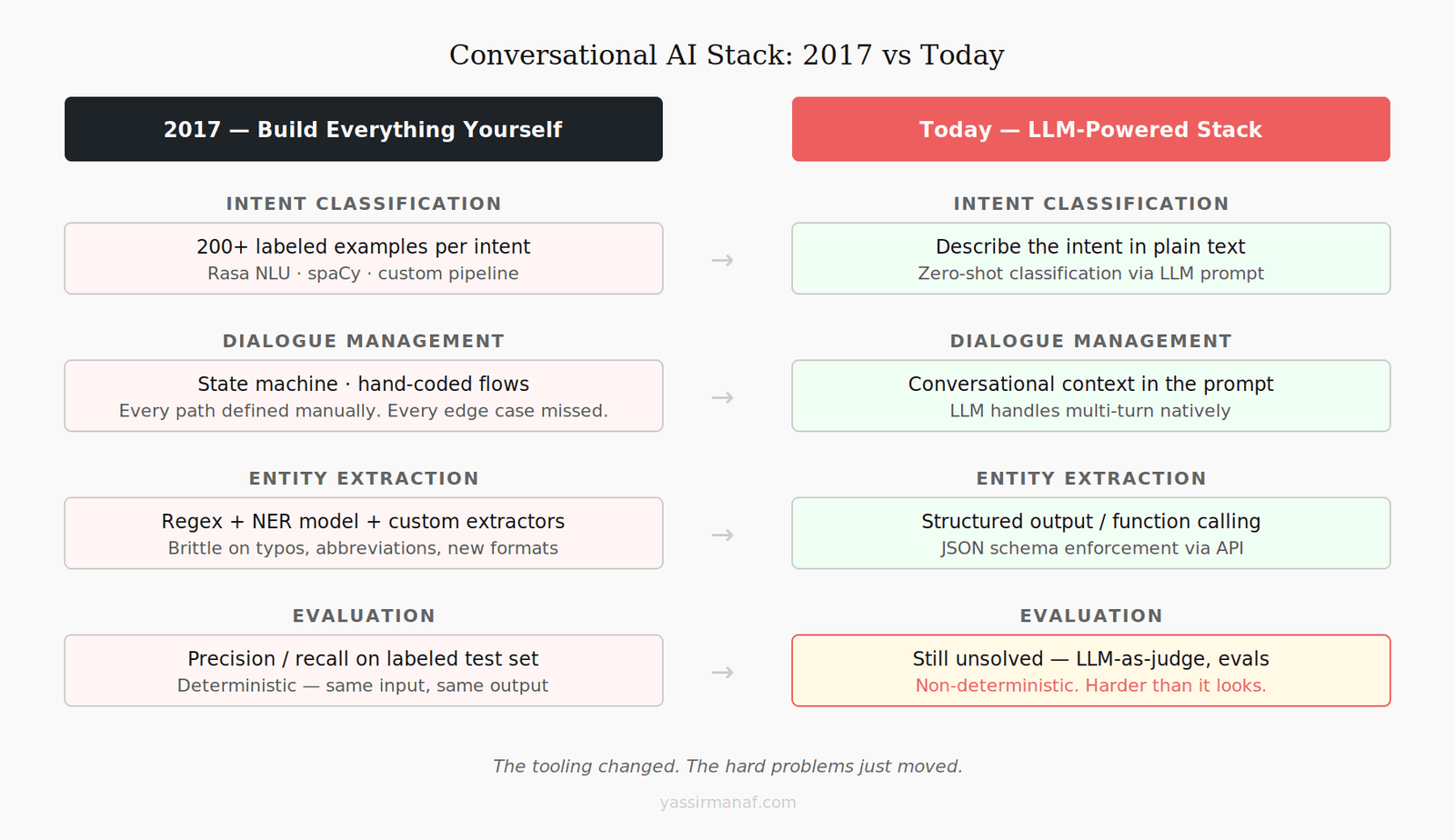

Eight years later, the tooling is unrecognizable. LLMs have made some of what we spent months building available in a single API call. The barrier to a working prototype dropped from months to days. Some of what used to be hard engineering problems are now prompt engineering problems.

But not all of them. Some just moved. And the teams treating LLMs as a shortcut past engineering discipline are running into the same walls we hit in 2017 — just faster, and with higher API bills.

Intent classification with 200 labeled examples — the pain

The first task in any conversational AI production pipeline is figuring out what the user wants. In 2017, that meant intent classification: train a model to map an utterance to one of N predefined intents.

The theory was clean. The practice was months of data wrangling.

The rule of thumb was 20-30 examples per intent. In reality, to get production-grade accuracy across a meaningful vocabulary, you needed 200+ per intent — carefully curated to cover linguistic variation: formal and informal phrasing, abbreviations, domain terminology, the way citizens actually write versus the way a product manager thinks they write.

Where did that data come from? Usually a mix of domain experts writing examples (slow, expensive, often unnatural) and bootstrapping from early production traffic — which meant deploying a half-broken system and accepting that early users would bounce. The cold start problem was genuinely painful.

Then there was the intent boundary problem. Users don’t constrain themselves to your taxonomy. They ask multi-intent questions. They ask things that don’t map to anything you’ve defined. The classifier returns a confidence score, and below a certain threshold you hit the fallback — the dead-end response that destroys trust faster than anything else. We had users explicitly complain about the fallback. By name.

Today? Zero-shot classification via LLM. Describe your intents in plain text. No labeled data, no cold start, dynamic taxonomy. For conversational AI production work, intent classification is effectively a solved problem now.

Dialogue management was the real bottleneck

If intent classification was the first hard problem, dialogue management was the one that never fully resolved.

Dialogue management decides what the system does next given conversation history: what to ask, what to collect, when to escalate, how to handle contradictions. In 2017, this meant a state machine — a hand-coded graph of states and transitions for every possible conversation path.

State machines are readable. Debuggable. Also fundamentally brittle once the conversation space gets complex.

The problem is combinatorial explosion. A conversation collecting 5 pieces of information, each of which can be provided out of order, corrected, or clarified, produces a state space that grows faster than anyone wants to maintain. Add exception handling — what happens when the user answers a question with another question? When they contradict something they said two turns ago? — and every edge case becomes a new branch.

We spent more engineering time on dialogue management than on everything else combined. And still the system surprised us in production, regularly, with conversation paths nobody had thought to model.

LLMs handle this natively. The context window is the conversation history. The model tracks what’s established, what’s still needed, and what conflicts — without a state machine. Honestly, this single change is the biggest productivity gain LLMs bring to conversational AI. What we spent weeks modeling explicitly now emerges from a well-written prompt.

What LLMs make trivially easy now

To be concrete:

Intent classification — zero-shot, no labeled data, handles out-of-vocabulary inputs.

Entity extraction — structured output and function calling let you define a JSON schema and get back typed, validated entities. No regex, no custom NER model.

Response generation — no template libraries. No 50 variants of “I didn’t understand that.” Responses are natural and contextually appropriate.

Multi-turn context — conversation history travels with every request. No custom session management.

Language coverage — multilingual support that would have required separate models per language in 2017 is free.

The 2017 stack needed a specialist for each of these. Today, a backend engineer who’s never touched NLP can have a working prototype in a day. That’s not an exaggeration — I’ve watched it happen.

What’s still hard — and always will be

The tooling changed. The hard problems moved, they didn’t disappear.

Domain expertise is not in the model. LLMs know what the internet knows. For specialized domains — regulated industries, proprietary systems, anything requiring institutional knowledge — general model knowledge is a starting point, not an answer. You still have to curate, structure, and maintain that knowledge base. That’s just engineering work that didn’t exist in 2017.

Evaluation is harder, not easier. In 2017, you ran a labeled test set, computed precision and recall, got a number. Deterministic outputs, objective metrics. LLM-based systems are non-deterministic — same input, different output across runs. Quality is partially subjective. A response can be technically accurate and still feel wrong in context. “LLM-as-judge” frameworks exist but have their own biases. Building a robust eval pipeline for a conversational AI production system is genuinely unsolved at the industry level, and most teams skip it entirely until something breaks visibly in front of a user. That’s exactly backwards.

Edge cases and adversarial inputs. In 2017 this meant unexpected intents and out-of-vocabulary inputs. Today it’s prompt injection, jailbreaks, and confident hallucinations on domain-specific queries. The attack surface changed shape. It didn’t shrink.

Cost and latency at scale. A locally-running intent classifier has near-zero marginal cost per request. LLM API calls don’t. At production scale — millions of conversations — the unit economics matter from day one, not month six.

The stack I’d use today for production conversational AI

Opinionated. Context-dependent. But here’s what I’d reach for:

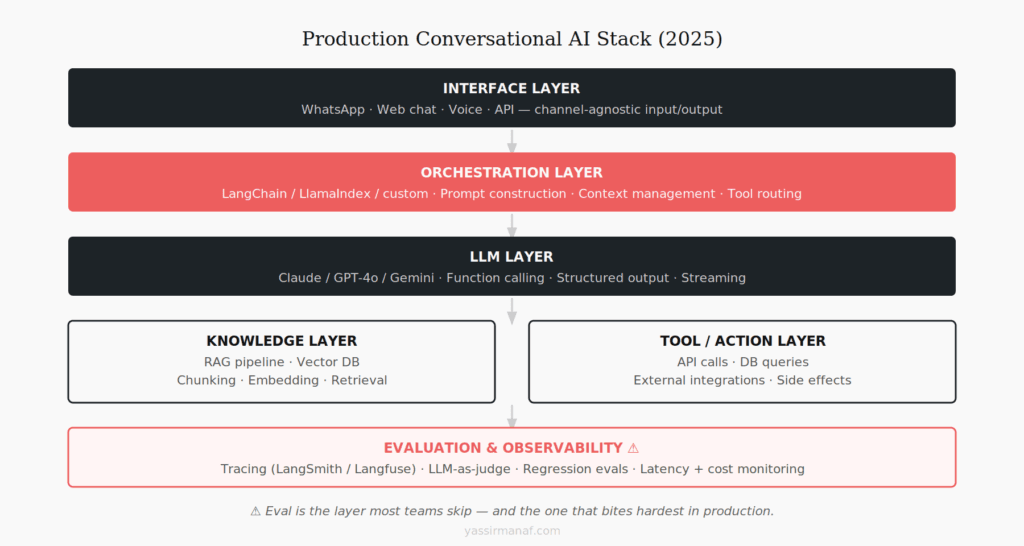

LLM: A frontier model (GPT-4o, Gemini 1.5 Pro) for complex reasoning and nuanced dialogue. A smaller, faster model for high-volume interactions where cost matters. Don’t use a Tier 1 model for everything.

Orchestration: LangChain or a lightweight custom layer, depending on complexity. LangChain abstractions help in early development and become overhead in mature systems. Evaluate honestly before committing to the full framework.

Knowledge: RAG if domain-specific knowledge is required. PostgreSQL with pgvector before a dedicated vector database. The complexity of Pinecone or Qdrant isn’t justified until you’ve actually outgrown what a well-indexed Postgres table can do.

Structured output: Function calling or structured output APIs for any typed data extraction step. Do not parse freeform LLM output with regex. I’ve seen that go wrong in ways that are genuinely hard to debug.

Evaluation: Langfuse or LangSmith for tracing from day one. Build a regression eval suite before you think you need one — the conversations that break your system in month three are the ones you didn’t test in month one.

Deployment: Stateless handlers. Conversation state in Redis. Keep the LLM layer thin and swappable — model APIs change faster than your business logic should.

The most important decision is keeping domain logic — what your system knows, what it can do, how it handles edges — separate from LLM plumbing. The LLM is infrastructure. Your domain logic is the actual product.

The tooling changed. The engineering discipline didn’t.

In 2017, building conversational AI in production required deep investment in components that LLMs now hand you for free. That investment was painful. It also built habits that I think the current generation of LLM builders is at risk of skipping.

Production systems aren’t demos. A demo works on the inputs you designed it for. A production conversational AI system has to work on every input a real user sends — including the ones designed to break it, the ones that arrive at 3am, and the ones you genuinely didn’t think of when you were writing the prompts.

The discipline that made 2017-era systems reliable — rigorous evaluation, graceful fallback, observable behavior, clean separation of concerns — applies directly to LLM systems. The teams that carry it forward will build things that hold up. The teams that treat LLMs as a shortcut past the engineering will rediscover the same lessons on a faster timeline.

Better tools. Same judgment required.

Building conversational AI in production? I’d like to hear what you’re running and what’s giving you trouble. Find me on LinkedIn.

Leave a Reply