Every architecture decision I’ve regretted had the same root cause: I added complexity I didn’t need. Not because I was careless — because I was careful in the wrong direction. Solving for hypothetical scale, hypothetical failure modes, hypothetical requirements that never showed up.

Choosing simplicity over over-engineering is the hardest discipline in software. It’s also the most valuable thing I’ve learned in ten years of building production systems.

The system that didn’t need microservices

Early in a major backend project at a Canadian development bank, my team inherited a system that processed loan applications. Thousands daily, with compliance requirements, audit trails, and integrations with multiple government databases. Real stakes.

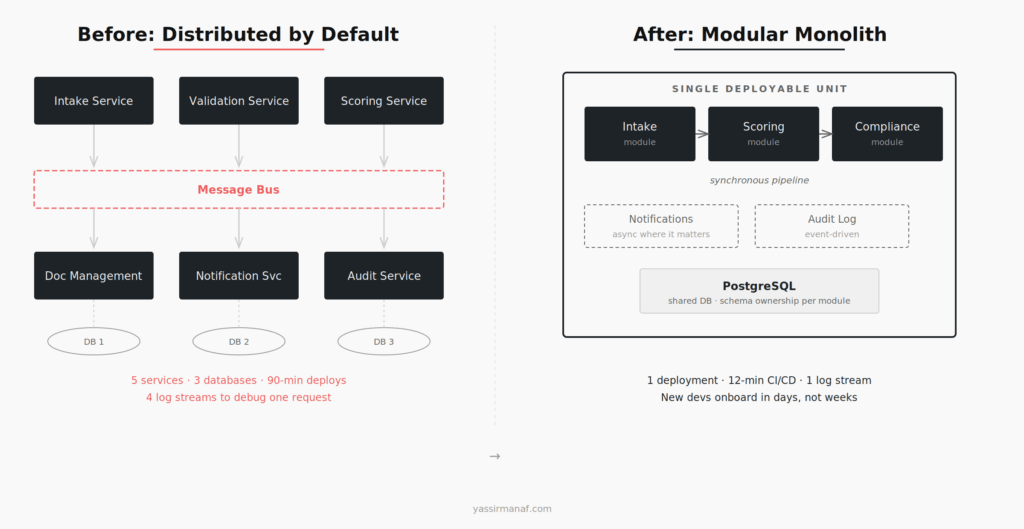

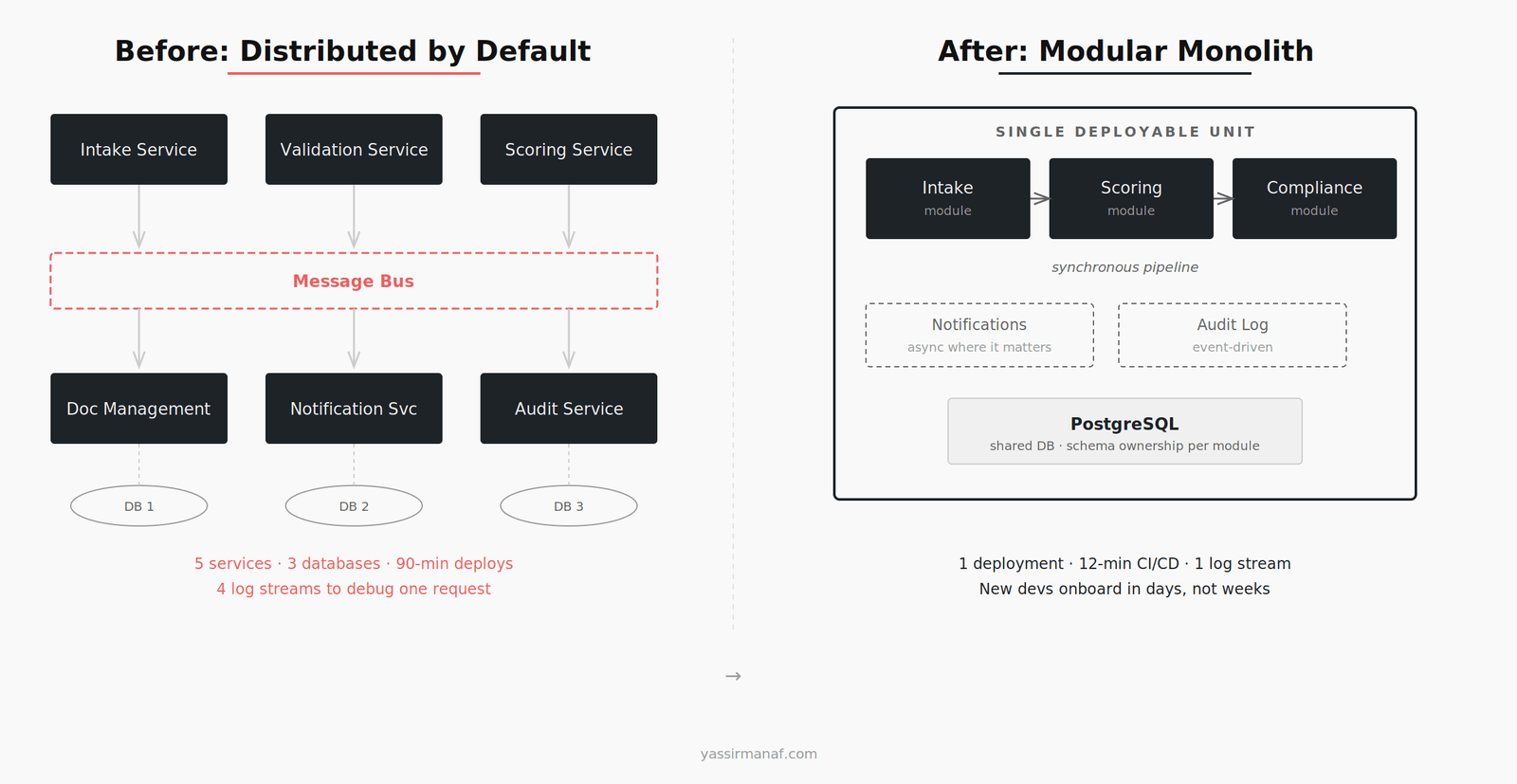

The previous architecture was a distributed setup: separate services for intake, validation, scoring, document management, and notification. Each with its own database. All communicating through a message bus. On paper, it looked like the textbook microservices diagram from a conference talk.

In practice, it was exhausting to operate.

Deployments required coordinating five services simultaneously. A schema change in one service cascaded into contract changes across three others. The message bus added latency the business couldn’t explain to applicants waiting on a response. Debugging a single failed loan application meant tracing events across four different log streams — and praying they were in sync.

The system wasn’t broken. It worked. But every change took three times as long as it should have, and every incident required a senior engineer to untangle distributed state before anyone could even start fixing things.

Why simplicity beats over-engineering at banking scale

We consolidated. Not into a tangle of shared code, which is what the word monolith makes people picture, but into a modular monolith: one deployable unit with clear internal boundaries. Separate modules for intake, scoring, and compliance share a single database, with well-defined schema ownership per module.
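To make "schema ownership per module" concrete, here is a minimal sketch using Python's standard-library `sqlite3`. Everything in it is an assumption for illustration: the `Module` class, the `OwnershipError` exception, and the table names are hypothetical and are not taken from the actual system. The idea is only that every module shares one database but writes exclusively to the tables it owns.

```python
import sqlite3


class OwnershipError(Exception):
    """Raised when a module tries to write to a table it does not own."""


class Module:
    """One internal module of the monolith; it may write only to its own tables."""

    def __init__(self, name: str, conn: sqlite3.Connection, owned_tables: set[str]):
        self.name = name
        self.conn = conn
        self.owned_tables = owned_tables

    def insert(self, table: str, row: dict) -> None:
        # Table and column names are trusted constants in this sketch;
        # only the values are passed as bound parameters.
        if table not in self.owned_tables:
            raise OwnershipError(f"{self.name} does not own table {table!r}")
        columns = ", ".join(row)
        placeholders = ", ".join("?" for _ in row)
        self.conn.execute(
            f"INSERT INTO {table} ({columns}) VALUES ({placeholders})",
            tuple(row.values()),
        )

    def read(self, table: str) -> list[tuple]:
        # Reads across module boundaries are allowed here; writes are not.
        return self.conn.execute(f"SELECT * FROM {table}").fetchall()


# One shared database, as in a single deployable unit (hypothetical tables).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE intake_applications (id INTEGER PRIMARY KEY, applicant TEXT)")
conn.execute("CREATE TABLE scoring_results (application_id INTEGER, score INTEGER)")

intake = Module("intake", conn, {"intake_applications"})
scoring = Module("scoring", conn, {"scoring_results"})

intake.insert("intake_applications", {"id": 1, "applicant": "Example Applicant"})
scoring.insert("scoring_results", {"application_id": 1, "score": 700})
```

In a real codebase the same rule is more likely enforced through database roles, code review, or import linting than through a runtime check. The point of the sketch is that the boundary lives inside one process and one database.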

The results were immediate and not subtle.

Deployment went from a 90-minute orchestrated ceremony to a 12-minute CI/CD pipeline. Debugging a failed application meant searching one log stream. New developers onboarded in days instead of weeks because they could follow a request from entry to exit in a single codebase — no distributed tracing required.

We kept event-driven patterns where they genuinely helped: async notification delivery and audit logging. But the core loan processing pipeline became synchronous and sequential. Because that’s what it actually was. A loan application moves through stages in order. Modeling it as an event-driven distributed system was an architectural lie we told ourselves to feel like we were building something sophisticated.
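As a rough illustration of "synchronous and sequential", the core pipeline can be sketched as a list of ordinary functions called in order. The stage names, the `Application` fields, and the approval rule below are all made up for the example; they only show the shape, not the real business logic.

```python
from dataclasses import dataclass, field


@dataclass
class Application:
    applicant: str
    income: int
    requested_amount: int
    status: str = "received"
    history: list[str] = field(default_factory=list)


def validate(app: Application) -> Application:
    if app.income <= 0 or app.requested_amount <= 0:
        raise ValueError("income and requested amount must be positive")
    app.status = "validated"
    return app


def score(app: Application) -> Application:
    # Placeholder rule, purely illustrative: approve requests up to 5x income.
    app.status = "approved" if app.requested_amount <= 5 * app.income else "declined"
    return app


# The order of the list is the order of the process. No bus, no subscriptions.
STAGES = [validate, score]


def process(app: Application) -> Application:
    """Run every stage in order, in one process, in one call stack."""
    for stage in STAGES:
        app = stage(app)
        app.history.append(f"{stage.__name__}: {app.status}")
    return app


result = process(Application("Example Applicant", income=50_000, requested_amount=200_000))
print(result.status, result.history)
```

When a stage fails here, the exception carries a single stack trace that names the stage. That is most of what made debugging faster.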

Same volume. Same compliance requirements. Same integrations. Less code, fewer moving parts, and a team that could actually maintain it.

The three questions I ask before adding complexity

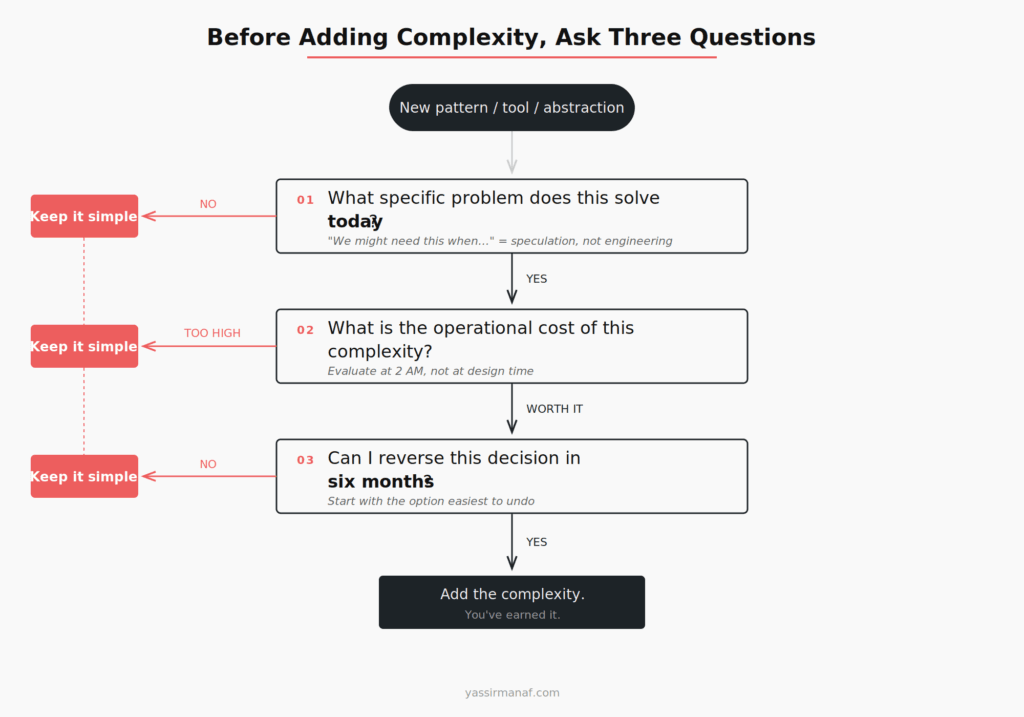

After that project, I developed a filter I still use on every architecture decision. Before adopting any pattern, framework, or abstraction that adds complexity, I ask three questions.

1. What specific problem does this solve today?

Not next quarter. Not when we scale 10x. Today. If the answer starts with “we might need this when…” — stop. You’re not engineering. You’re speculating. Speculation dressed up as architecture is still speculation.

Microservices solve a specific problem: independent deployment and scaling of components owned by different teams. If you have one team and one deployment pipeline, microservices are organizational overhead without any organizational benefit.

2. What is the operational cost of this complexity?

Every abstraction has a tax. A message bus means monitoring consumer lag, handling dead letters, managing schema evolution, and debugging async flows at 2am. A separate database per service means distributed transactions or eventual consistency — both of which add cognitive load to every feature you build afterward.

Engineers chronically underestimate operational cost because they evaluate complexity at design time, not at 2am when something’s on fire. The question isn’t whether you can build it. It’s whether you can run it, debug it, and hand it to the next person on the team.

3. Can I reverse this decision in six months?

This is the one most people skip entirely. Some decisions are nearly irreversible — splitting a database, adopting a new language, going all-in on a cloud provider’s proprietary services. Those deserve deliberate, slow thinking.

But most decisions aren’t like that. Extracting a module into a service later is straightforward if your internal boundaries are clean. Going the other direction — merging distributed services back into a single deployment — is painful and expensive. I’ve done it. It’s not fun.
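One way to keep that later extraction cheap is to have callers depend on a small interface rather than on a concrete module. The sketch below assumes a hypothetical `Scorer` interface, an invented scoring formula, and a `transport` callable standing in for whatever network client a future service would use. None of these names come from the original system.

```python
from typing import Callable, Protocol


class Scorer(Protocol):
    def score(self, income: int, requested_amount: int) -> int: ...


class InProcessScorer:
    """Today: scoring runs inside the monolith as a plain method call."""

    def score(self, income: int, requested_amount: int) -> int:
        # Invented formula for illustration; assumes requested_amount > 0.
        return min(850, 300 + (1000 * income) // requested_amount)


class RemoteScorer:
    """Later, only if needed: same interface, backed by a network call."""

    def __init__(self, transport: Callable[[dict], dict]):
        # transport is a hypothetical callable: request dict in, response dict out.
        self.transport = transport

    def score(self, income: int, requested_amount: int) -> int:
        response = self.transport({"income": income, "requested_amount": requested_amount})
        return int(response["score"])


def decide(scorer: Scorer, income: int, requested_amount: int) -> str:
    # The caller does not know or care where scoring actually runs.
    return "approved" if scorer.score(income, requested_amount) >= 600 else "declined"


# A fake transport that simulates the remote service for this example.
def fake_transport(payload: dict) -> dict:
    return {"score": InProcessScorer().score(**payload)}


local_decision = decide(InProcessScorer(), 80_000, 100_000)
remote_decision = decide(RemoteScorer(fake_transport), 50_000, 200_000)
```

If the boundary is this narrow, moving scoring out of process means writing one new class. The reverse migration has no equivalent shortcut, because distributed state has to be merged back by hand.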

Start with the option that’s easiest to reverse. In almost every case, that means starting simpler.

When complexity is actually justified

I’m not arguing against complexity. I’m arguing against unjustified complexity — the kind that gets added by default rather than by necessity. There’s a difference between a system that’s complex because it needs to be and one that’s complex because nobody stopped to ask.

Here’s when I actually reach for the more complex option:

Independent team ownership. If two teams need to deploy on different schedules without blocking each other, separate services make sense. The organizational boundary justifies the architectural one. But make sure the teams actually exist first. I’ve seen service boundaries drawn around teams that were planned, budgeted, and never hired.

Genuinely different scaling profiles. If one component handles 100 requests per second and another handles 100,000, they probably shouldn’t share resources. But measure first. Most systems don’t have this problem, and optimizing for scale you haven’t measured is just guessing with more infrastructure.

Regulatory isolation. Some compliance requirements mandate that certain data lives in separate systems with separate access controls. In banking, this is a real constraint — PCI data has legitimate reasons to live behind its own boundary. But this is the exception, not the default.

Failure isolation for critical paths. If a notification service going down shouldn’t block loan processing, separate them. But be honest about which failures actually matter. Most teams over-index on fault tolerance for non-critical paths while quietly ignoring the real risks in their core pipeline.
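For the failure-isolation case, here is a minimal sketch of keeping a notification outage off the critical path. It uses a deliberately failing `send_notification` stub and an in-memory retry queue, both hypothetical. A real system would use a durable queue, and whether the notifier also needs to be a separate deployable is a decision this sketch does not settle.

```python
import logging
import queue

logger = logging.getLogger("loans")

# In-memory stand-in for a durable retry queue (assumption for the example).
retry_queue = queue.Queue()


def send_notification(message: dict) -> None:
    """Hypothetical stand-in for a notification provider that is currently down."""
    raise ConnectionError("notification provider unavailable")


def record_decision(application_id: int, decision: str) -> dict:
    """The critical path: this must succeed or fail loudly."""
    return {"application_id": application_id, "decision": decision}


def process_application(application_id: int, decision: str, notify=send_notification) -> dict:
    record = record_decision(application_id, decision)
    try:
        notify(record)
    except Exception:
        # A notification failure is logged and queued for retry.
        # It never rolls back or blocks the loan decision itself.
        logger.warning("notification failed for application %s; queued for retry", application_id)
        retry_queue.put(record)
    return record


outcome = process_application(1, "approved")
```

Note what is not wrapped in the `try`: `record_decision`. Swallowing errors on the core path would hide exactly the failures that matter.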

The pattern: every justification is a concrete, present-tense constraint. Not a future possibility. A problem you can point to right now.

The anti-pattern is building for scale you’ll never reach

The industry has a deep bias toward complexity. Conference talks celebrate distributed systems. Job postings list microservices and Kafka as requirements for apps serving a few thousand users. Technical blogs showcase elaborate architectures that would be impressive — if the system actually needed them.

Nobody gets on stage to say: “We built a straightforward application with a PostgreSQL database and it handles everything the business needs.” But that’s the reality for most companies. Most systems don’t need event sourcing. Most teams don’t need Kubernetes. Most products don’t need a service mesh.

The irony is that choosing simplicity over over-engineering requires more discipline than the alternative. Anyone can add another service, another layer, another abstraction. It takes experience, and some confidence, to say: this is enough. This solves the problem. We’re done here.

I’ve spent a decade building production systems. The ones I’m proudest of aren’t the most sophisticated. They’re the ones still running, still maintainable, and still understood by the teams that own them — years after I moved on.

That’s what simplicity buys you. Not a simpler system. A system that lasts.

What’s the most over-engineered system you’ve inherited? I’d like to hear how you approached it. Find me on LinkedIn.
